Alan Turing was born a hundred years ago this year, and his most important paper was published seventy-six years ago (November 1936). It is close to impossible to overstate the influence that Turing has had on the modern world. His life is well worth celebrating throughout this centennial year. Although any celebration must be tempered by reflection on the circumstances of his death, I would very much like to tell him that “it got better.”

Since others with better knowledge of history than I are writing this year about many aspects of Turing’s life and influence, I’ll discuss something that doesn’t make the rounds often: Alan Turing and randomness. Turing knew a great deal about randomness and talked about what kinds of things can and can’t be computed. If you would like to know why true randomness isn’t “computable”, please read on. Discussion of randomness in 1Password and cryptography will be in a later article.

The Millennium of the Algorithm

I like to call the second millennium “the millennium of the algorithm”. An algorithm is a finite list of step-by-step instructions that, if followed, will get you a result. When we learned how to add multi-digit numbers in grade school, we learned an algorithm. The same is true for multiplication and division. These algorithms for arithmetic were developed and described by Muḥammad ibn Mūsā al-Khwārizmī in about 825 CE and translated into Latin in the 12th century. It is from al-Khwārizmī’s name that we get the word “algorithm”. We also get the word “algebra” from the title of one of his books.
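To make "a finite list of step-by-step instructions" concrete, here is a minimal sketch of the grade-school addition algorithm in Python. The function name `add_digits` is my own choice for illustration. It works on strings of decimal digits, column by column from the right, carrying as it goes.

```python
def add_digits(a: str, b: str) -> str:
    """Add two non-negative integers given as strings of decimal digits."""
    result = []
    carry = 0
    i, j = len(a) - 1, len(b) - 1
    # Work from the rightmost column to the leftmost, carrying as needed.
    while i >= 0 or j >= 0 or carry:
        digit_a = int(a[i]) if i >= 0 else 0
        digit_b = int(b[j]) if j >= 0 else 0
        total = digit_a + digit_b + carry
        result.append(str(total % 10))  # the digit written under the column
        carry = total // 10             # the digit carried to the next column
        i -= 1
        j -= 1
    return "".join(reversed(result))


print(add_digits("478", "964"))
```

Nothing in those steps requires insight or judgment. Anyone (or anything) that follows them faithfully gets the sum.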

In the final century of the millennium, Alan Turing found a way to treat algorithms as mathematical objects. This involved inventing a computer programming language and describing a physical machine that it would run on. The particular machine wouldn’t be very practical because Turing needed it to be the simplest thing possible that would compute, in principle, anything that a more complicated physical device could do. It would have been horrendously inefficient if actually built.

LEGO Turing Machine from ecalpemos on Vimeo

The full title of Turing’s 1936 paper is “On computable numbers, with an application to the Entscheidungsproblem”, but I will only be talking about the “On computable numbers” part. The Entscheidungsproblem is utterly fascinating and was the goal of his paper, but let’s set it aside for another post or perhaps a few beers.

Different kinds of numbers

There are lots of numbers between 0 and 1. We call all the numbers in that range real numbers. Among the real numbers are rational numbers (numbers that are a fraction of whole numbers) like 1/3, but there are also numbers that can’t be expressed as fractions of whole numbers, like the square root of 2. This fact about the square root of 2 was so disturbing to the Pythagoreans’ view of the universe that they attempted to keep it secret. Legend has it that the person who revealed the secret got tossed overboard and drowned as punishment. Anyway, numbers that can’t be expressed as ratios of whole numbers are called irrational numbers.

It turns out that there are as many even numbers as there are whole numbers. This is because for any whole number that you care to name, I can name an even number that matches it. If you say “33”, I’ll just say “66”. This may seem strange, but if we can find a way to pair up the members of one set (in this case whole numbers) with the members of another set (in this case even numbers), we say the sets are the same size. It actually gives us a useful way to talk about infinities.
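The pairing game can be written down as two tiny functions. This is only a sketch, and the names `to_even` and `to_whole` are mine. What makes it a fair pairing is that each function undoes the other, so no whole number and no even number is ever left without a partner.

```python
def to_even(n: int) -> int:
    """The even number paired with whole number n."""
    return 2 * n


def to_whole(e: int) -> int:
    """The whole number paired with even number e."""
    return e // 2


# You say 33, I say 66, and from 66 we can always get back to 33.
print(to_even(33), to_whole(66))
```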

Since there are as many whole numbers as even numbers, you might reasonably think at this point that all infinities are the same. You’d be wrong, but it certainly wouldn’t be unreasonable to have that incorrect intuition. There are, in fact, more real (irrational) numbers than there are rational numbers. The proof of this is one of the most beautiful things ever invented, but I won’t go into it.

So we’ve got a different, bigger infinity of irrational numbers than we have of rational numbers. When a set has as many elements as there are counting numbers, we call that set “countable”. It’s infinite, but we can match up elements to the counting numbers. The counting numbers are countable, as are the rational numbers. The set of real numbers, however, is not countable. This means that we cannot pair up each real number with some counting number.
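It may not be obvious that the rational numbers are countable, so here is one way to see it for the fractions between 0 and 1. This is a sketch with a generator name I made up. It lists fractions by increasing denominator and skips any that aren't in lowest terms, so every fraction shows up exactly once at some definite position in the list.

```python
from fractions import Fraction
from itertools import islice
from math import gcd


def rationals_between_0_and_1():
    """Yield every rational strictly between 0 and 1, each exactly once."""
    denominator = 2
    while True:
        for numerator in range(1, denominator):
            # Skip fractions such as 2/4 that repeat an earlier one (1/2).
            if gcd(numerator, denominator) == 1:
                yield Fraction(numerator, denominator)
        denominator += 1


# The position in this list is the counting number paired with each fraction.
for position, q in enumerate(islice(rationals_between_0_and_1(), 7), start=1):
    print(position, q)
```

No such listing can exist for the real numbers, which is exactly what "not countable" means.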

How irrational can we get?

There are different types of irrational numbers. Things like the square root of 2 are called algebraic numbers for reasons I won’t explain. But there are irrational numbers that go beyond, or transcend, the algebraic numbers, and these are called transcendental numbers. The most famous transcendental number is π, the ratio of the circumference of a circle to its diameter. Of course, π has been known about for a very long time, but it was only proved to be transcendental in the 19th century. The trigonometric functions like sine and cosine typically yield transcendental results.

There are algorithms to compute any algebraic numbers (things like the square root of 2) to any precision that we need. There are also algorithms to calculate the kinds of transcendental numbers that we tend to use, like π or the sine of 10.
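As an illustration of "to any precision that we need", here is a short sketch that produces as many decimal places of the square root of 2 as you ask for. It leans on Python's exact integer square root, `math.isqrt` (Python 3.8 or later). The function name `sqrt2_digits` is mine.

```python
from math import isqrt


def sqrt2_digits(places: int) -> str:
    """Return the square root of 2 truncated to `places` decimal places."""
    # isqrt(2 * 10**(2 * places)) is the integer part of sqrt(2) * 10**places,
    # computed with exact integer arithmetic, so there is no rounding error.
    scaled = str(isqrt(2 * 10 ** (2 * places)))
    if places == 0:
        return scaled
    return scaled[0] + "." + scaled[1:]


print(sqrt2_digits(10))
print(sqrt2_digits(50))
```

The digits never end, but for any number of places you name, the procedure finishes and hands them over. That is the sense in which the square root of 2 is computable.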

On Computable Numbers

Imagine a hotel – let’s call it the Hilbert Hotel – that has a countably infinite number of rooms, each of which is big enough for only one guest. Suppose you have every room filled, and a countably infinite number of new guests arrive. You can still fit them all in by having each current guest move to double their current room number. If Alice is staying in room 1, she will move to room 2. Barbara, who has been in room 2, will be moving to room 4. The guest in room 33 will be moving to room 66. After this move, all of the odd-numbered rooms will be vacant, and you can then give out those rooms to your new guests.
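The hotel's bookkeeping fits in two lines of arithmetic. In this sketch (the function names are mine, and I am assuming the new arrivals are numbered 1, 2, 3, and so on), current guests go to the even rooms and the k-th new arrival gets the k-th odd room.

```python
def room_after_move(old_room: int) -> int:
    """Where a current guest ends up: double their old room number."""
    return 2 * old_room


def room_for_new_guest(arrival_number: int) -> int:
    """The k-th new arrival takes the k-th odd-numbered room."""
    return 2 * arrival_number - 1


# Check a finite stretch of the hotel: nobody shares, and no room is skipped.
n = 10
moved = {room_after_move(r) for r in range(1, n + 1)}
arrived = {room_for_new_guest(k) for k in range(1, n + 1)}
print(sorted(moved | arrived))
```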

If you have an infinite number of guests who would like to stay, and you can find a way to assign each to her own room, then you have countably infinite guests. But if there is no way to give each guest her own room, then you have an uncountable number of guests.

By carefully defining what it means to compute something, Turing found a way to give every possible algorithm a room to itself in Hilbert’s countably infinite hotel. This means that there are “only” a countable number of algorithms. At the same time, we know that there are uncountably many real numbers. Since there are more real numbers than there are algorithms, there must be real numbers that no algorithm can produce. Some things are uncomputable.
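Here is one way to picture how every algorithm can get its own room. To be clear, this is a simplified illustration and not the encoding Turing used in his paper. The idea is that any program is a finite string of symbols from some finite alphabet, and all such strings can be listed shortest first, alphabetically within each length. The two-letter alphabet "ab" below is a stand-in for whatever symbols a real programming language uses, and the function names are mine.

```python
from itertools import count, islice, product


def all_strings(alphabet: str):
    """Yield every finite non-empty string over `alphabet`, shortest first."""
    for length in count(1):
        for letters in product(alphabet, repeat=length):
            yield "".join(letters)


def room_number(text: str, alphabet: str) -> int:
    """The position of `text` in the listing above, counting from 1."""
    n = 0
    for ch in text:
        n = n * len(alphabet) + alphabet.index(ch) + 1
    return n


print(list(islice(all_strings("ab"), 7)))
print(room_number("aaa", "ab"))
```

Most of the strings in the list are gibberish rather than working programs, but that doesn't matter. Every program is in there somewhere, so there can't be more programs than there are rooms.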

Plenty of irrational numbers are computable. We do have algorithms for computing the square root of 2 or the sine of 50. Turing’s result here (remember, he actually just used all of this as a building block to settle a bigger question in mathematics) tells us, among other things, that while there are countably many things that we can compute, there are uncountably many things that we can’t compute. The overwhelming majority of numbers are things that we can’t compute.

Computing Machines

Turing was able to define the notion of “computable” by imagining the simplest device with the simplest of mechanisms that could do everything that we think of as computation. But there is some confusion about the term “Turing Machine”. Although it can be used to refer to the imaginary physical device described in “On Computable Numbers”, it often refers to a program for that device (Turing called his programs “machines”). There is a special kind of Turing Machine (program) which can read and execute any Turing Machine. We call such programs or systems Universal (Turing) Machines.

One important fact about a Turing Machine (the imaginary device or individual programs) is that it always produces the same result with the same input. The result of each step in an algorithm depends entirely on what it has to work with and the step itself. If an algorithm has a step that adds two digits, the results must always be the same given the same starting digits. Algorithms cannot have steps in them like “flip a coin, if it comes up heads do X, otherwise do Y.” There is no coin flipper inside a Turing Machine.
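A toy simulator makes the point about determinism visible. This is a rough sketch in the spirit of Turing's device, not a faithful rendering of the notation in his paper. The rule-table format, the `run` function, and the example `INVERT` program are all my own inventions for illustration. Each rule says: in this state, reading this symbol, write that symbol, move the head left or right, and switch to that state.

```python
def run(program, tape, state="start", blank="_", max_steps=10_000):
    """Run a rule table on a tape and return the tape's final contents.

    `program` maps (state, symbol) to (symbol_to_write, move, next_state),
    where move is "L" or "R". The machine stops when it reaches "halt"
    (or, as a safety net for this sketch, after max_steps steps).
    """
    cells = dict(enumerate(tape))
    head = 0
    for _ in range(max_steps):
        if state == "halt":
            break
        symbol = cells.get(head, blank)
        # The next action is looked up, never chosen: one entry per situation.
        write, move, state = program[(state, symbol)]
        cells[head] = write
        head += 1 if move == "R" else -1
    return "".join(cells[i] for i in sorted(cells)).strip(blank)


# Example program: flip every bit, then halt at the first blank cell.
INVERT = {
    ("start", "0"): ("1", "R", "start"),
    ("start", "1"): ("0", "R", "start"),
    ("start", "_"): ("_", "R", "halt"),
}

print(run(INVERT, "1011"))
print(run(INVERT, "1011"))
```

Run it as many times as you like and the second line will always match the first. The rule table has exactly one entry for each situation the machine can be in, so there is nowhere for a coin flip to hide.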

Pick a number, any number

If we pick a number at random from the real numbers between 0 and 1, it will almost certainly be a non-computable number, as the overwhelming majority of numbers aren’t computable. So can we pick a random number that way? Not with a Turing Machine. Each step in an algorithm should get you to a single specific result based on where you start. True randomness is not computable.
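You can see the same thing with the "random" numbers that programming languages hand out. Python's `random` module, for example, is a deterministic pseudorandom generator: start it from the same seed and it walks through exactly the same sequence. The seed value 2012 and the helper name below are arbitrary choices of mine.

```python
import random


def five_numbers(seed: int) -> list:
    """Five 'random' numbers between 0 and 1 from a generator started at `seed`."""
    generator = random.Random(seed)
    return [generator.random() for _ in range(5)]


first_run = five_numbers(2012)
second_run = five_numbers(2012)

# Same starting point, same steps, same "random" numbers.
print(first_run == second_run)
```

The output looks unpredictable, but it is the result of an algorithm, and an algorithm has no choice about what it does next.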

Turing knew that Turing machines could not generate true randomness, and Turing knew a great deal about randomness. His 1934 King’s College (Cambridge) Fellowship Dissertation had been on one of the most fundamental theorems in statistics. His now famous work as a code breaker at Bletchley Park during the Second World War involved an innovative application of Bayes’ Rule to cryptanalysis.

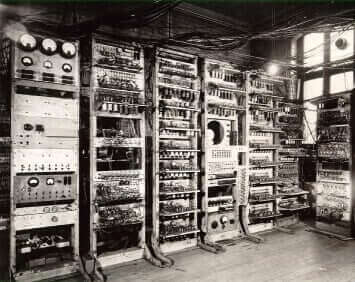

When Turing got to play with real early computers for his own academic interests, he knew that if he wanted anything with truly random numbers, it would have to go beyond the computable. He had a hardware random number generator included in the design of the Mark I at Manchester University in 1949. A paper by Martin Campbell-Kelly describes what was almost certainly the first attempt at a hardware random number generator in an electronic computer:

At the request of Turing an instruction to generate a random number from a noise source was provided. Unfortunately, the numbers turned out to be not particularly random, and debugging was difficult because programmes were not repeatable; consequently, the instruction eventually fell into disuse.

The lesson in all of this history and theory is that randomness has been a difficult problem since the very beginnings of computing.

More randomness to come

1Password, like pretty much all cryptographic software, needs cryptographically secure random numbers to do its stuff securely. What it means for a number to be cryptographically secure, why 1Password needs such numbers, and where it gets those from will be the subject of a future article.

Further reading

If you would like to look to the past, then I very strongly recommend Andrew Hodges’ Alan Turing: The Enigma as the definitive biography, and for a remarkably accessible yet complete discussion of On Computable Numbers, I enthusiastically recommend Charles Petzold’s The Annotated Turing.

by Jeffrey Goldberg on
